A vendor once told me their AI platform detected and neutralised 99.7% of advanced threats in real time. Zero analyst intervention required. The pitch was polished. The demo was impressive. The dashboards were beautiful.

Six months after deployment, a social engineering attack on the IT service desk led to the exfiltration of 40,000 employee records. The platform was running the entire time.

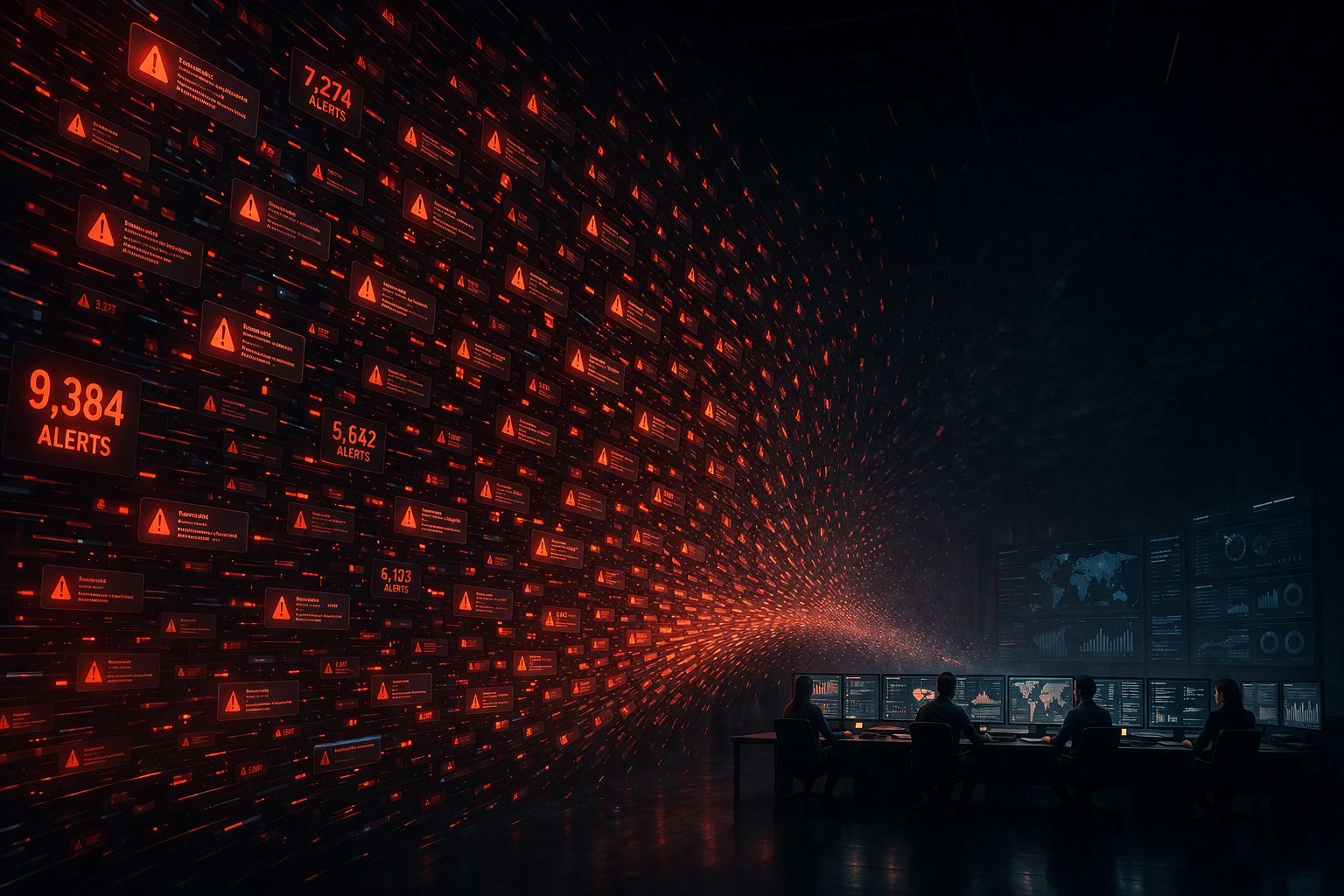

What went wrong was not the technology. The model had never been trained on social engineering attacks. The system generated 4,000 alerts per day that analysts had stopped reading. And the team had placed so much trust in the AI score that they had stopped questioning what it missed.

That gap between the vendor pitch and operational reality is where most cybersecurity crises actually begin. Not in the code. Not in the firewall configuration. In the human system behind the technology.

This post connects to themes I have explored in "What Is AI Security" and in my posts on agentic AI threats and AI agent identity risks. The common thread is that technology is only as strong as the governance and leadership around it.

The Cybersecurity Leadership Crisis Nobody Talks About

Most organisations today have more cybersecurity capability than they have ever had. More tools. Bigger budgets. Better dashboards. Stronger compliance frameworks.

And yet breaches continue.

Not because defenders are lazy. Not because technology is useless. Because the real failure points are human. They live in delayed decisions, exhausted teams, unclear ownership, and a culture that rewards the appearance of security over the discipline of it.

The cybersecurity industry is deep in what Gartner calls the Trough of Disillusionment for AI in security. The hype peaked around 2022. The promises were enormous. The reality has been more complicated.

The cybersecurity leadership crisis is not about a shortage of tools. It is about a shortage of honest conversations about how those tools are actually being used.

– Avijit Patra

Where Crises Actually Start

Most incidents begin long before malware executes.

They begin when warnings are ignored and ownership is unclear. When overstretched teams become exhausted and start missing what they once would have caught. When leaders delay difficult decisions because disruption feels immediate while risk still feels distant.

They begin when accountability becomes political and reporting is designed to reassure rather than reveal.

By the time the technical event becomes visible, the damage path is often already in place.

I have seen organisations with average tools respond exceptionally well because leadership was clear, teams were trusted, and decisions were fast. I have also seen well-funded environments collapse under pressure because the human system behind the technology was weak.

The Four Failure Points I Keep Seeing

Delayed Decisions During Active Incidents

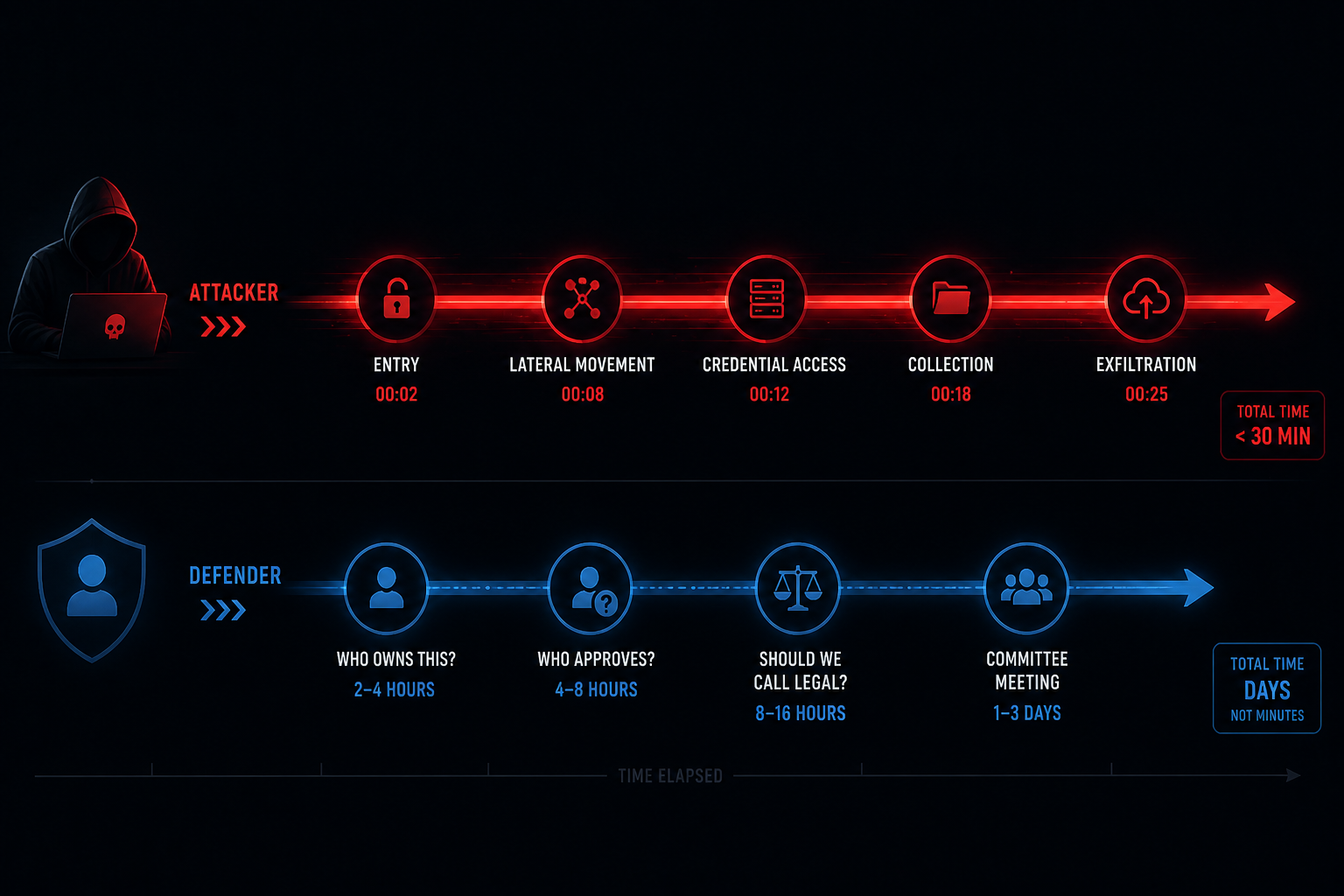

During a live incident, minutes matter. Yet many organisations lose valuable time asking the same questions over and over. Who owns this system? Can we isolate production safely? Who approves a shutdown? Should legal be involved now? Do we disclose immediately or wait?

While internal debate continues, the attacker keeps moving.

I have seen technically capable teams slowed not by lack of skill but by unclear authority and slow governance. The attacker moves at machine speed. Many organisations still govern at committee speed.

Burnout Inside Security Teams

Security teams are often expected to be permanently available, unfailingly accurate, and endlessly calm, all while somehow remaining understaffed. That model is unsustainable.

Over time, burnout creates turnover, missed signals, shallow investigations, and a culture that becomes permanently reactive. Fatigued teams may still function. They rarely function at their best when it matters most.

Think of it this way. A surgeon who has been awake for 36 hours can still hold a scalpel. That does not mean you want them operating on you. Security analysts in a state of chronic fatigue face the same problem. They can still work. But the quality of their judgment degrades in ways that are invisible until something goes wrong.

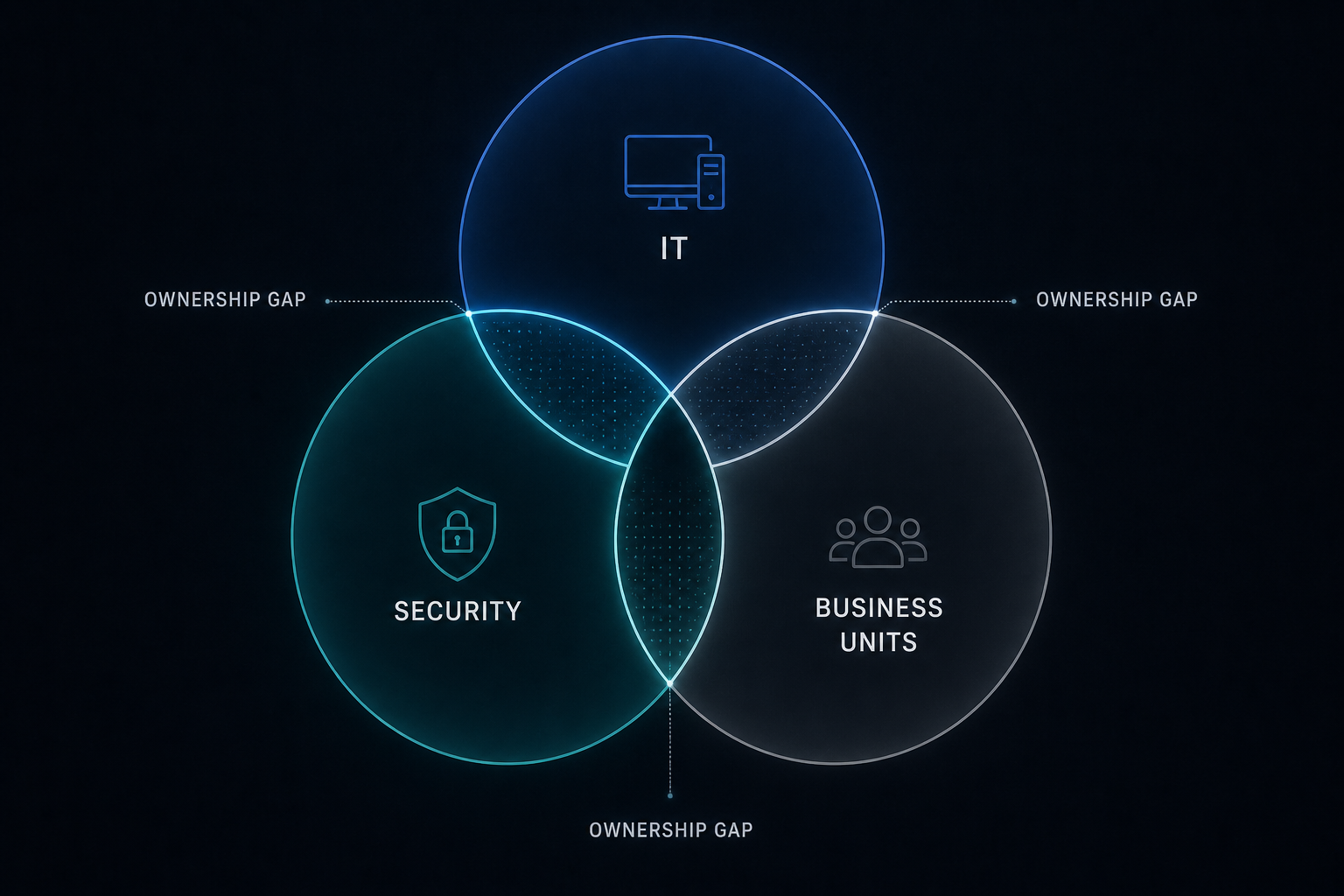

Ownership Confusion

Some of the most dangerous risks exist in the space between functions.

Cloud infrastructure may be owned by IT while risk sits with security, leaving nobody truly accountable for misconfiguration. HR may own onboarding while IT owns provisioning, and nobody fully owns offboarding risk. Business units adopt SaaS platforms faster than governance can keep pace.

Attackers regularly benefit from these gaps. Many breaches exploit the space between teams, not the weakness of any one team.
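One way to make these gaps visible is to record, for each system or process, who operates it and who is accountable for it, then flag anything where accountability is blank. The sketch below is illustrative only. The `Asset` fields, the team names, and the sample entries are hypothetical and loosely mirror the examples above; they are not drawn from any real inventory.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Asset:
    """One system or process in a hypothetical ownership register."""
    name: str
    operated_by: Optional[str]   # team that runs it day to day
    accountable: Optional[str]   # single named party answerable for its risk


def find_ownership_gaps(assets: List[Asset]) -> List[str]:
    """Return the names of assets that have no accountable party."""
    return [a.name for a in assets if not a.accountable]


# Hypothetical sample entries (assumptions for illustration).
REGISTER = [
    Asset("cloud-infrastructure", operated_by="IT", accountable=None),
    Asset("employee-onboarding", operated_by="HR", accountable="HR"),
    Asset("employee-offboarding", operated_by="IT", accountable=None),
    Asset("business-unit-saas", operated_by="Marketing", accountable=None),
]

gaps = find_ownership_gaps(REGISTER)
print(gaps)
```

The point of a check like this is not the code. It is that an empty `accountable` field becomes a question someone has to answer before an incident, not during one.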

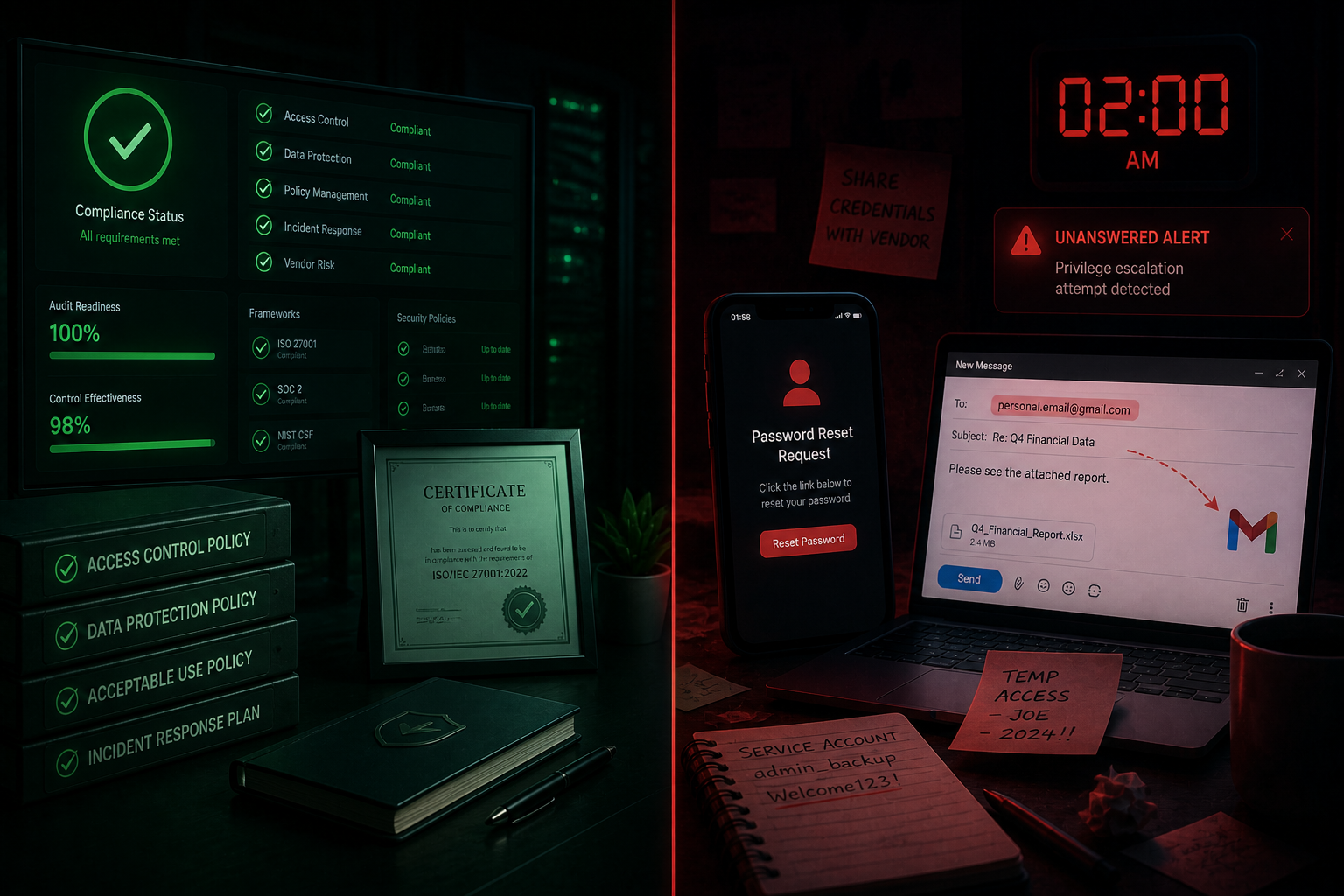

Security Theatre

Some organisations become highly efficient at looking secure. They optimise for passing audits, polished board dashboards, policy documentation, and vendor logos.

But those same organisations may neglect tested recovery plans, access discipline, incident readiness, and operational reality.

Compliant and secure are related ideas. They are not the same idea.

I have seen organisations tick every compliance box. ISO 27001. SOC 2. DPDPA. GDPR. And still get breached because their IT service desk reset passwords based on a phone call, users emailed sensitive data to personal accounts, and nobody acted on the AI alerts at 2am.

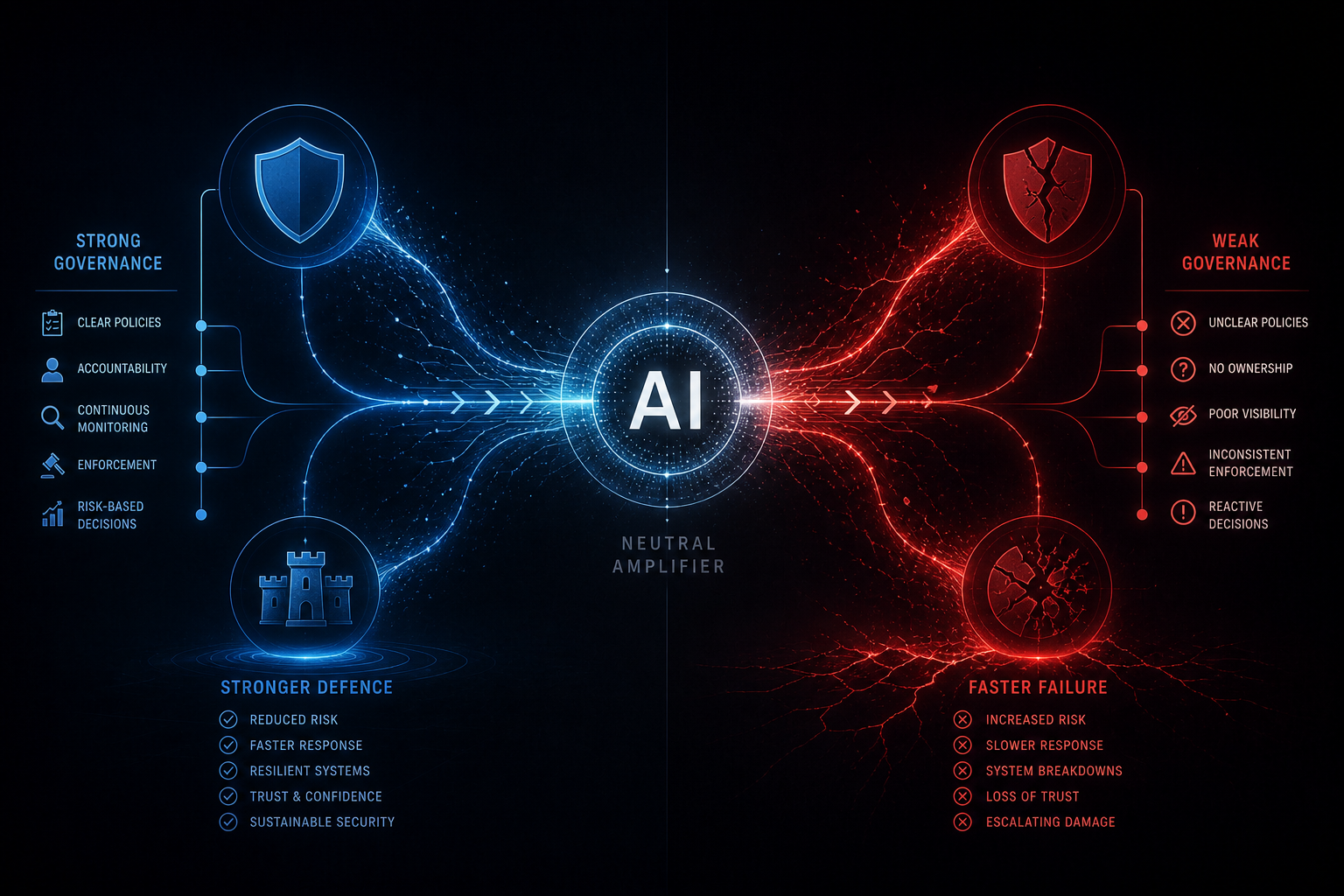

Why AI May Magnify the Cybersecurity Leadership Crisis

AI is creating real value inside cybersecurity. Used well, it improves detection speed, accelerates summarisation, supports triage, and reduces time spent on repetitive workflows. For overstretched teams, those gains matter.

But technology rarely enters a vacuum.

In strong organisations, AI enhances discipline. In weak organisations, it magnifies existing problems.

I have seen teams place too much confidence in automated scores without asking whether the underlying logic is sound. I have seen poor decisions made faster simply because they were automated. I have seen analysts rely on AI summaries instead of developing investigative depth.

At the leadership level, one of the most dangerous assumptions is believing a new tool has replaced the need for experienced talent. It has not.

– Avijit Patra

At the same time, attackers are using the same class of AI tools for faster phishing, better impersonation, and more convincing social engineering. Researchers have reported roughly a fivefold increase in phishing click rates when emails are generated by AI rather than written manually. In a case reported in 2024, a finance employee in the Hong Kong office of UK engineering firm Arup transferred about US$25 million after a deepfake video call that impersonated the company's CFO. The impersonation was flawless. The call lasted minutes. The damage took months to unravel.

What Strong Organisations Do Differently

The most resilient organisations are not the ones with the largest budgets. They are usually the ones with the clearest operating discipline.

Clear Decision Rights

During a crisis, confusion is expensive. Strong organisations know in advance who can isolate systems, who can approve emergency spend, who speaks externally, and who owns business continuity decisions. They do not wait for an incident to define authority.

I once watched a CISO rehearse a ransomware scenario with her full leadership team. When the real incident came four months later, the first three containment decisions took under five minutes. That rehearsal saved the company.
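Decision rights of this kind can be written down as a small register and checked before an incident. What follows is a minimal sketch, assuming each decision has one primary role and one deputy. The four decisions come from the paragraph above; the role assignments, the `DecisionRight` structure, and the helper names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRight:
    decision: str
    primary: str   # role with authority to decide
    deputy: str    # role that decides if the primary is unreachable


# Role assignments are assumptions for illustration only.
DECISION_RIGHTS = [
    DecisionRight("isolate systems", "Head of Security Operations", "CISO"),
    DecisionRight("approve emergency spend", "CFO", "CEO"),
    DecisionRight("speak externally", "Head of Communications", "CEO"),
    DecisionRight("business continuity", "COO", ""),  # deputy not yet agreed
]


def unresolved(rights):
    """Return decisions that lack a primary or a deputy."""
    return [
        r.decision
        for r in rights
        if not r.primary.strip() or not r.deputy.strip()
    ]


def who_decides(rights, decision, unreachable=frozenset()):
    """Return the first reachable role for a decision, or None if nobody can act."""
    for r in rights:
        if r.decision == decision:
            for role in (r.primary, r.deputy):
                if role and role not in unreachable:
                    return role
    return None


print(unresolved(DECISION_RIGHTS))
print(who_decides(DECISION_RIGHTS, "isolate systems",
                  unreachable={"Head of Security Operations"}))
```

A `None` from `who_decides` is the rehearsal finding that matters most: a decision that nobody reachable is authorised to make.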

Incident Rehearsals

Mature organisations rehearse realistically. Their tabletop exercises are cross-functional, practical, and sometimes uncomfortable. They involve legal, HR, communications, finance, operations, and executive leadership. Not just the security team. Because real incidents rarely stay inside one department.

Security as a Business Function

Strong security teams understand more than controls and alerts. They understand revenue impact, operational dependencies, customer trust, and regulatory exposure. They translate technical risk into business language. That is often the difference between being heard and being ignored.

Sustainable Teams

Resilient organisations know exhausted teams are fragile teams. They invest in manageable workloads, training, growth paths, recognition, and rotation. This is not soft leadership. It is operational risk management.

Honest Reporting

Strong leaders prefer uncomfortable truths early over polished narratives late. They create environments where risks can be raised clearly, metrics reflect reality, and bad news is not punished. That honesty often prevents crises long before technology needs to.

I have seen a single honest escalation from a junior analyst prevent a breach that would have cost millions. The analyst felt safe enough to raise an uncomfortable concern. The leadership team acted on it within the hour. In a different culture, that same concern would have been filtered, softened, or delayed until it was too late.

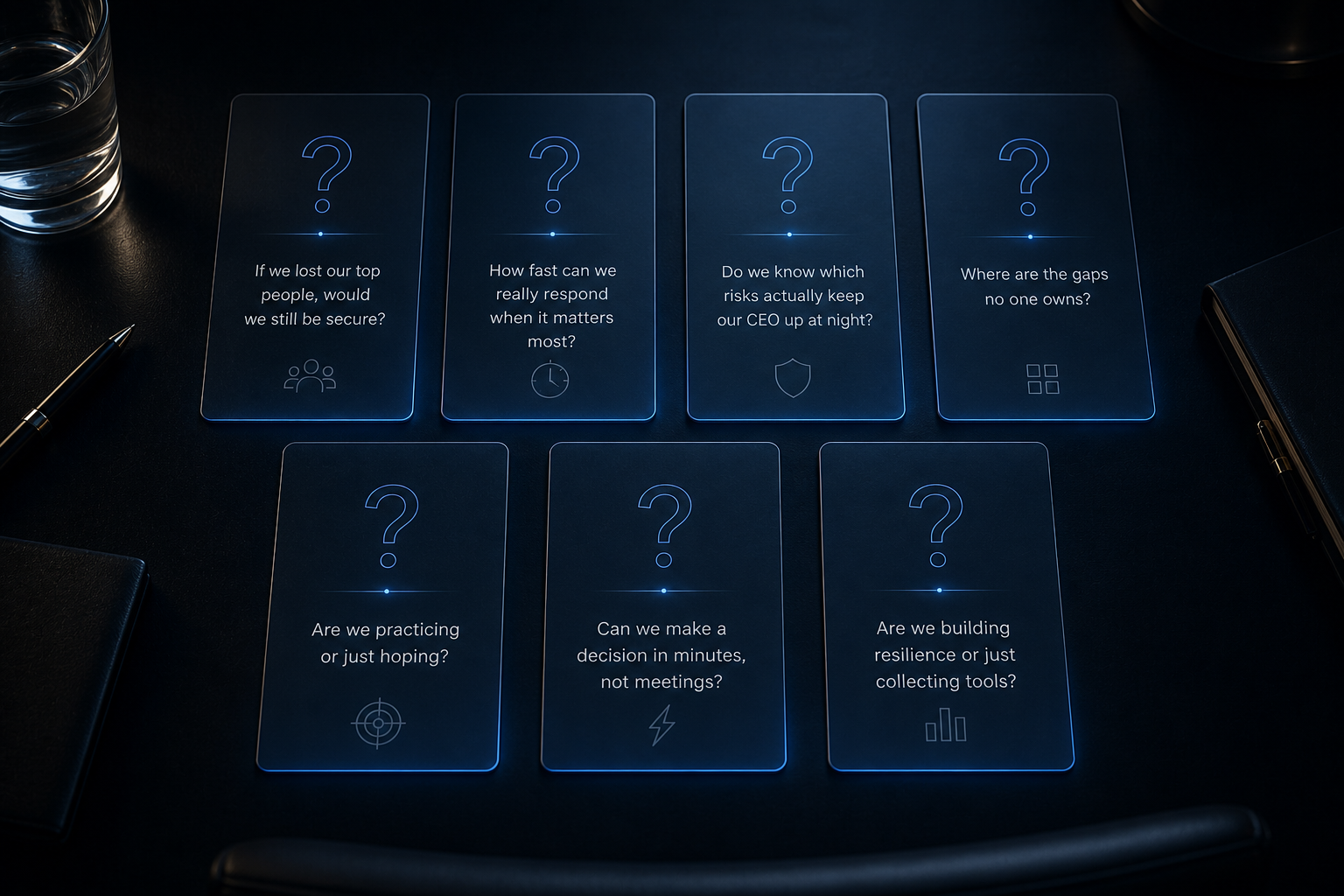

Seven Questions Every CEO Should Ask Right Now

Most leaders ask whether they have enough tools, enough budget, or enough coverage. Those are valid questions. But better questions reveal more.

- If ransomware hit tonight, who would make the first three decisions? In my experience, most leadership teams take two to three minutes of silence before anyone speaks. That pause tells you everything about how the real incident will go.

- How long would it realistically take to isolate critical systems?

- Which business process would fail first if key platforms went offline?

- Where are we dependent on one exhausted team or one irreplaceable individual? This is the question that makes people most uncomfortable. It is also the one that draws out the most honest answers.

- Are current metrics showing operational reality or executive comfort?

- Who owns identity risk across the enterprise?

- Have we practised a real crisis recently, with real pressure and real trade-offs?

These are not technical questions. They are leadership questions. And in many organisations, the quality of these answers reveals far more than the size of the cyber budget.

The DPDPA Dimension: When Human Failure Becomes Legal Liability

For organisations operating in India, the human failures described in this article are not just operational risks. They are legal ones.

The Digital Personal Data Protection Act, 2023 (DPDPA) requires organisations to take reasonable security safeguards against personal data breaches and to demonstrate clear governance over how personal data is handled. When a breach occurs because an exhausted analyst missed an alert, or because nobody owned the offboarding process, or because leadership delayed a containment decision, the DPDPA does not distinguish between technical failure and human failure.

The organisation is liable regardless.

Under the DPDPA, penalties for failing to take reasonable security safeguards can reach ₹250 crore. The law does not care whether the breach was caused by a software vulnerability or a leadership vacuum.

The Crisis That Technology Cannot Solve

The next era of cybersecurity will not be won by whoever buys the most tools.

It will be won by organisations that strengthen the capabilities technology cannot create on its own. Trust under pressure. Clarity in chaos. Accountability across silos. Resilient teams. Disciplined execution. Leaders who act early.

Technology will continue to matter. Modern security operations depend on strong platforms, visibility, automation, and intelligent controls. But many organisations have already learned a harder truth: tools alone do not fix a weak human system.

The organisations that navigate this well will not be the ones with the most impressive dashboards. They will be the ones where someone in the room had the courage to say the uncomfortable thing before it was too late.

– Avijit Patra

About the Author

Avijit Patra is a cybersecurity and AI governance expert who helps organisations navigate the intersection of technology adoption and risk management. He speaks at academic institutions and industry events on AI security, digital trust, and the human realities of cybersecurity. This article draws from a guest lecture delivered to a Computer Science faculty series in 2026. Book a free conversation with him at avijit.in.